CHALLENGING COMMON MISCONCEPTIONS ABOUT THE ROBUSTNESS OF ASCS AND PSS SACE DATA

Local authority (LA) staff involved in our earlier research and consultations frequently reported that they – or, more commonly, the decision-makers within their organisation (e.g. managers, commissioners) – viewed the Adult Social Care Survey (ASCS) and Carers Survey (PSS SACE) with caution and felt that the survey data had limited value for the design and delivery of local services. In our own online survey, for example, the ASCS and PSS SACE were described as “just a tick box exercise”, “a key check that we are not getting things very badly wrong”, and “primarily a quality assurance monitoring tool rather than an evaluation tool to influence policy and practice”.

“The intelligence the surveys provides is not, on the whole, sufficiently reliable to be strong evidence for change in practice/policy”

[Local authority representative. Source: MAX online survey]

As a potential consequence of these views – and increasing time and resource pressures, and other barriers – we found that many organisations primarily used ASCS and PSS SACE data for accountability and benchmarking purposes (e.g. via survey reports and direct ASCOF comparisons).

Our recent discussions with LA staff indicate that these views and practices may still be widespread, and that we need to do more to encourage LAs to engage with the surveys and use the data to guide local decision-making and service improvements. This is, in fact, one of the key aims of our project blogs (the other is to show you how you can use the MAX toolkit). As some decision-makers may be concerned about the robustness of the data collected by the ASCS and PSS SACE, this blog outlines the rigorous methodology underlying the development of the surveys and explains why both can provide an invaluable opportunity for local research.

THE DEVELOPMENT OF THE ASCS AND PSS SACE FOLLOWED RECOMMENDED PRACTICE IN QUESTIONNAIRE DESIGN….

Both surveys were initially developed here at the Personal Social Services Research Unit (PSSRU)[i], using a number of different research methods. These included:

| Method | Description | Example from survey development |
| --- | --- | --- |
| Literature reviews | Extensive investigations of academic and non-academic literature for a specific purpose (e.g. to establish what is known about a given subject). | To identify key aspects of quality and outcomes for carers, and to develop an initial set of questions for the PSS SACE. |
| Cognitive interviews / pre-testing | A ‘think-aloud’ and/or probing technique conducted with potential respondents to establish how they interpret and respond to specific questions. Used to establish the validity of new questions and whether any adjustments are required. | To ensure that the questions developed for the ASCS and PSS SACE were easy to understand and answer, and also measure what they were supposed to measure. |
| Focus groups | Open discussions with representatives from particular groups to collect their opinions or feedback on a specific subject, product or tool. | To create and test an ‘easy read’ version of the ASCS for people with learning disabilities. |
| Stakeholder consultations | One-to-one and group discussions with representatives from relevant organisations (in this instance, from LAs, the government, voluntary and third sector organisations, and so on). | To ensure that the surveys reflected both national and local priorities. |

…. AND INVOLVED A RANGE OF STAKEHOLDERS

A wide range of stakeholders were involved in the development of the ASCS and PSS SACE. These included service-users and their carers, care home residents and managers, and representatives from local authorities, third-sector organisations, national government departments and bodies representing health and social care leaders (including the Department of Health, now the Department of Health and Social Care; the Department for Communities and Local Government; and the Association of Directors of Adult Social Services).

The use of multiple research methods and the inclusion of so many different stakeholders means in practice that: [1] the questions included in the ASCS and PSS SACE ask about the issues that service-users and carers, as well as regional and national representatives, have identified as important; and [2] the data collected by the surveys can be used to fulfil local, as well as national, information needs and priorities in adult social care.

THE SURVEYS USE TESTED AND WELL-VALIDATED MEASURES

Two types of questions are included in the ASCS and PSS SACE: questions on individual outcomes, such as quality of life and satisfaction; and questions that can be used to interpret those outcomes, such as respondent age and self-reported health status.

Many of the questions have been drawn from existing surveys and measurement tools. The questions underlying the social care related quality of life (SCRQOL) and carer-reported quality of life (Carer QOL) outcome measures, for example, are drawn from the Adult Social Care Outcomes Toolkit (ASCOT). The ASCOT was developed specifically for the social care population, also by researchers at PSSRU, and has previously been tested on social care users (see www.pssru.ac.uk/ascot). Other questions included in the ASCS and PSS SACE were developed specifically for the surveys and were tested to ensure adequate validity.

The use of rigorously tested and validated questions means in practice that the questions included in the surveys measure what they are supposed to measure, and that decision-makers can be confident that their ASCS and PSS SACE datasets provide an accurate reflection of the views and reported outcomes of the service users within their remit.

AND ONE FINAL POINT TO CONSIDER…

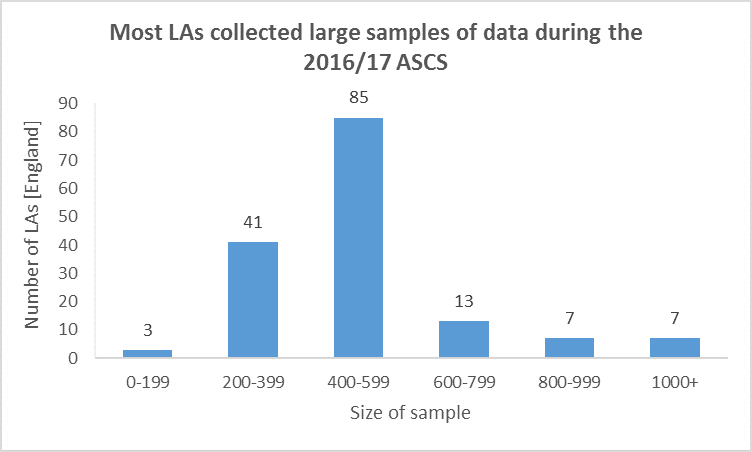

If this brief overview of the survey development has not convinced you to consider using the data – or more of the data – from the ASCS and PSS SACE, then consider this: the surveys are completed by large and representative samples of service users and carers within your remit. During the 2016/17 ASCS collection, for example, over half of the LAs in England achieved a sample of size of 400+ service-users (see chart below).

Data drawn from the data quality 2016/17 annexe, available at https://digital.nhs.uk/catalogue/PUB30102
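To give a rough sense of what a sample of 400 means in practice, the short sketch below estimates the margin of error around a survey percentage. It is an illustration only and rests on simplifying assumptions that are ours, not part of the survey guidance: a simple random sample, a 95% confidence level, and no adjustment for the size of the eligible population or for the stratified sampling used in the surveys. The function name `margin_of_error` is hypothetical.

```python
import math


def margin_of_error(n, p=0.5, z=1.96):
    """Approximate margin of error for a proportion.

    Assumes a simple random sample (an illustrative simplification).
    n: number of respondents
    p: observed proportion (0.5 gives the widest, most cautious margin)
    z: z-score for the confidence level (1.96 for roughly 95%)
    """
    if n <= 0:
        raise ValueError("n must be a positive number of respondents")
    return z * math.sqrt(p * (1 - p) / n)


if __name__ == "__main__":
    # Compare a few sample sizes, including the 400+ mentioned above.
    for n in (100, 400, 1000):
        moe = margin_of_error(n) * 100
        print(f"n={n}: +/- {moe:.1f} percentage points")
```

Under these assumptions, a sample of 400 gives a margin of error of roughly 5 percentage points either side of a reported percentage, and the margin narrows as the sample grows. Results for smaller subgroups within a dataset will be less precise than results for the sample as a whole.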

This means that your ASCS and PSS SACE datasets can provide you and the decision-makers within your organisation with an invaluable opportunity for local research. Guidance on how to do this is provided in the MAX planning and analysis guides, and is also the subject of a number of our other blogs.

WANT TO FIND OUT MORE?

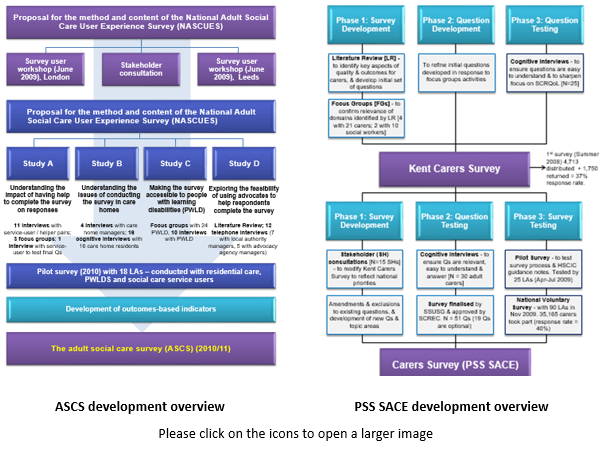

The development of the surveys are outlined in the figures below and the more detailed summaries included in the MAX toolkit can be accessed here [ASCS] [PSS SACE].

Future blogs will describe how local modifications can be used to improve the local relevance of ASCS and PSS SACE data, and how focused analysis can transform the survey datasets into meaningful management information. The next blog discusses why you should read the MAX planning guide and can be accessed here.

Disclaimers

This blog is based on independent research commissioned and funded by the NIHR Policy Research Programme (Maximising the value of survey data in adult social care (MAX) project and MAX toolkit implementation and impact study). The views expressed in the publication are those of the author(s) and not necessarily those of the NHS, the NIHR, the Department of Health and Social Care or its arm’s length bodies or other government departments.

_________________________________________________________________________

[i] The content and methodology of the ASCS and PSS SACE are now reviewed and updated by the Social Services User Survey Group (SSUSG), which includes representatives from NHS Digital, the Department of Health and Social Care, the Care Quality Commission (CQC), the Personal Social Services Research Unit (PSSRU), Councils with Adult Social Services Responsibilities (CASSRs) (or LAs), and relevant carer and service user groups.